Visionary architecture for location anomaly suppression thresholds in global merchant onboarding

Embracing Predictive Compliance: Location Anomaly Suppression Architecture

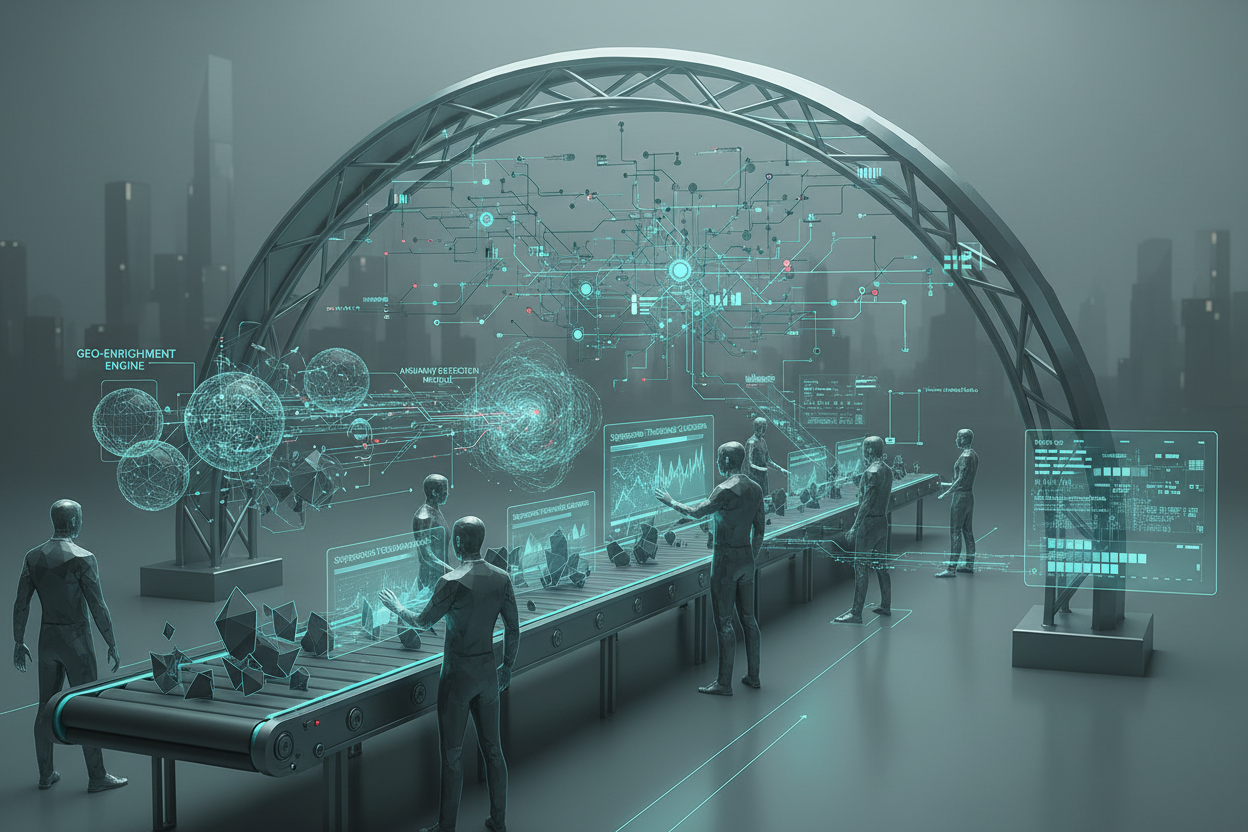

The future of merchant acquiring risk control hinges on proactive, data-driven compliance. A key aspect of this evolution is the intelligent management of location anomalies during cross-border merchant onboarding. Suppressing irrelevant alerts without compromising security requires a visionary architecture that anticipates threats and adapts to evolving risk landscapes. Forget reactive measures; we're building a system that predicts emerging fraud patterns.

Core Components of a Predictive Anomaly Suppression System

At the heart of this architecture lies a multi-layered system designed for precision and scalability. Consider these key components:

- Geo-Enrichment Engine: Enriches merchant data with location intelligence, providing a contextual understanding of the merchant's operational environment. This is not just about mapping IP addresses; it's about understanding local business regulations, common fraud schemes, and regional risk factors. Think beyond simple data points; envision a rich tapestry of location-based insights.

- Anomaly Detection Module: This module leverages machine learning algorithms to identify deviations from expected location patterns. It moves beyond simple rule-based systems to recognize subtle anomalies indicative of fraudulent activity. Crucially, it needs to be adaptable to different merchant types and geographic regions.

- Suppression Threshold Calibration: The intelligent engine that adjusts anomaly suppression thresholds dynamically based on risk scoring, merchant history, and external threat intelligence. This is where the 'suppression' happens, but it's not blind suppression; it's surgically precise risk mitigation.

- Decision Log & Audit Trail: A tamper-proof record of all suppression decisions, facilitating compliance and enabling continuous improvement of the anomaly detection model. This is non-negotiable. Every suppression decision must be auditable, providing a clear lineage of how and why the decision was made.
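
The calibration component can be sketched in a few lines. This is only an illustration under stated assumptions: the `MerchantContext` fields, the 0-to-1 scales, and every weight below are hypothetical and would need tuning against real data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MerchantContext:
    risk_score: float             # hypothetical scale: 0.0 (low risk) to 1.0 (high risk)
    months_in_good_standing: int  # merchant history signal
    threat_level: float           # hypothetical external feed: 0.0 (calm) to 1.0 (active campaign)


def suppression_threshold(ctx: MerchantContext, base: float = 0.5) -> float:
    """Return the anomaly score below which an alert would be suppressed."""
    # Higher merchant risk lowers the threshold, so fewer alerts are suppressed.
    threshold = base * (1.0 - ctx.risk_score)
    # A clean history earns a small allowance, capped at 24 months.
    threshold += min(ctx.months_in_good_standing, 24) / 24 * 0.1
    # Elevated external threat tightens the threshold again.
    threshold *= 1.0 - 0.5 * ctx.threat_level
    # Clamp so the result is always a valid score cutoff.
    return max(0.0, min(1.0, threshold))


established = suppression_threshold(MerchantContext(0.1, 24, 0.0))
newcomer = suppression_threshold(MerchantContext(0.8, 0, 0.0))
print(f"established={established:.2f} newcomer={newcomer:.2f}")
```

The point of the sketch is the direction of each adjustment, not the numbers: risk and threat intelligence can only make suppression harder, while history can relax it by a bounded amount.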

Optimized Data Pipelines for Location Anomaly Scoring at Scale

The effectiveness of your anomaly suppression hinges on the robustness and efficiency of your data pipelines. Speed and accuracy are paramount, especially during peak onboarding periods. Let's imagine a scenario: a flash sale triggers a surge in merchant registrations across multiple continents. Can your system handle the load and still maintain compliance integrity?

Building a High-Performance Data Pipeline

Consider this reference flow:

- Data Ingestion: Real-time ingestion of merchant application data, enriched with GeoIP data and other relevant location signals, e.g. payment processor origin.

- Feature Extraction: Derive a feature vector from the raw data that represents the merchant's location profile. This vector includes elements such as historical location consistency, proximity to known fraudulent locations, and alignment with stated business operations.

- Anomaly Scoring: The anomaly detection module calculates an anomaly score based on the feature vector. This score reflects the degree to which the merchant's location profile deviates from expected norms.

- Suppression Threshold Evaluation: The calibrated suppression threshold is applied to the anomaly score. If the score falls below the threshold, the anomaly is suppressed.

- Decision Logging: Regardless of whether the anomaly is suppressed or not, the decision, along with all relevant data and metadata, is logged in the audit trail, providing a complete provenance of the decision.

This pipeline should be designed for horizontal scalability, capable of handling massive data volumes with low latency.
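
The reference flow can be sketched end to end as follows. Everything here is an assumption made for illustration: the `Application` fields, the two mismatch features, and the averaging "model" stand in for a real enrichment service and a trained anomaly detector.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Application:
    merchant_id: str
    declared_country: str
    ip_country: str          # from GeoIP enrichment (hypothetical field)
    processor_country: str   # payment processor origin (hypothetical field)


def extract_features(app: Application) -> List[float]:
    # Each feature is 1.0 when a location signal disagrees with the declared country.
    return [
        float(app.ip_country != app.declared_country),
        float(app.processor_country != app.declared_country),
    ]


def anomaly_score(features: List[float]) -> float:
    # Placeholder for the anomaly detection module: mean of the mismatch flags.
    return sum(features) / len(features)


def evaluate(app: Application, threshold: float, audit_log: List[Dict]) -> bool:
    features = extract_features(app)
    score = anomaly_score(features)
    suppressed = score < threshold  # below the threshold means suppressed
    # The decision is logged whether or not the anomaly is suppressed.
    audit_log.append({
        "merchant_id": app.merchant_id,
        "features": features,
        "anomaly_score": score,
        "threshold": threshold,
        "suppressed": suppressed,
    })
    return suppressed


log: List[Dict] = []
evaluate(Application("m-1", "DE", "DE", "DE"), 0.4, log)
evaluate(Application("m-2", "DE", "FR", "DE"), 0.4, log)
print([entry["suppressed"] for entry in log])
```

Because each stage is a pure function of its input, the stages can be run as independent workers, which is one way to get the horizontal scalability described above.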

Addressing Failure Modes and Edge Cases in Location Checks

Even the most sophisticated anomaly detection systems are vulnerable to failure. Understanding potential failure modes is crucial for building a resilient and reliable platform. Let's consider some key edge cases:

Common Failure Scenarios and Mitigation Strategies

- Data Quality Issues: Inaccurate or incomplete location data can lead to false positives or false negatives. Implement rigorous data validation and cleansing processes, including automated data quality checks and manual reviews.

- Model Drift: The anomaly detection model may become less accurate over time as fraud patterns evolve. Implement continuous model monitoring and retraining processes to ensure the model remains up to date and effective.

- Edge Case Over-Suppression: Suppression thresholds set too high can hide genuine anomalies, because more scores fall below the cutoff. Implement a feedback loop whereby risk analysts review suppressed anomalies, providing input to fine-tune the suppression thresholds.

- Geopolitical Events: Sudden geopolitical shifts can invalidate geolocation data. The system should adapt dynamically.
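
As an illustration of the automated data quality checks mentioned above, here is a minimal validation sketch. The function name and the specific rules are assumptions; the country check only verifies the two-letter uppercase shape and does not confirm that the code is actually assigned.

```python
from typing import List, Optional


def validate_location(
    lat: Optional[float], lon: Optional[float], country: Optional[str]
) -> List[str]:
    """Return a list of data quality problems; an empty list means the record passed."""
    problems: List[str] = []
    if lat is None or lon is None:
        problems.append("missing coordinates")
    else:
        if not -90.0 <= lat <= 90.0:
            problems.append("latitude out of range")
        if not -180.0 <= lon <= 180.0:
            problems.append("longitude out of range")
        if lat == 0.0 and lon == 0.0:
            # (0, 0) is a common default value rather than a real location.
            problems.append("coordinates are (0, 0), likely a default value")
    if not country or len(country) != 2 or not country.isalpha() or not country.isupper():
        problems.append("country is not a two-letter uppercase code")
    return problems


print(validate_location(52.52, 13.405, "DE"))
print(validate_location(95.0, 200.0, "de"))
```

Records that fail these checks could be routed to manual review instead of being scored, so bad input does not turn into a false positive or a false negative.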

Hardening Tactics for Location Anomaly Thresholds: Enhancing Compliance Confidence

While automation is key, a human-in-the-loop approach is critical for high-stakes decisions. We integrate human expertise into the decision-making process, particularly for borderline cases. This involves presenting risk analysts with a clear and concise summary of the anomaly, along with supporting evidence, allowing them to make informed decisions.

Checklist: Strengthening Your Anomaly Suppression Logic

- Implement Role-Based Access Control (RBAC): Restrict access to sensitive data and configuration settings to authorized personnel only.

- Regular Security Audits: Conduct regular security audits to identify and address potential vulnerabilities.

- Data Encryption: Encrypt sensitive data at rest and in transit to protect it from unauthorized access.

- Incident Response Plan: Develop and implement an incident response plan to handle security breaches and data leaks.

- Monitor GeoIP Providers: Confirm that your providers undergo yearly SOC 2 audits, and review the audit reports yourself.

Data Lineage Map and Audit Trail Requirements

Maintaining a thorough audit trail is vital for demonstrating compliance to regulators and stakeholders. Your decision log should include at a minimum:

- Timestamp of the anomaly detection and suppression decision.

- Merchant identifier.

- Anomaly score.

- Suppression threshold used.

- Justification for the suppression decision (e.g., rule-based exception, manual approval).

- User responsible for the suppression decision (if applicable).

A detailed data lineage map should illustrate the flow of data from its source to the final decision, documenting all transformations and enrichments along the way. Visualizing the complete data flow from raw input to final risk assessment is key.
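
The minimum fields listed above map naturally onto a record type. The sketch below also chains entries by hash, which is one possible way to make tampering detectable; it does not make tampering impossible, and the field names and chaining scheme are assumptions rather than a prescribed format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import List, Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class DecisionLogEntry:
    timestamp: str
    merchant_id: str
    anomaly_score: float
    suppression_threshold: float
    suppressed: bool
    justification: str
    decided_by: Optional[str]  # None for fully automated decisions
    prev_hash: str
    entry_hash: str = ""


def _digest(fields: dict) -> str:
    # Canonical JSON (sorted keys) so the same fields always hash identically.
    payload = json.dumps(fields, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(log: List[DecisionLogEntry], **fields) -> DecisionLogEntry:
    prev_hash = log[-1].entry_hash if log else GENESIS_HASH
    body = dict(fields, prev_hash=prev_hash)
    entry = DecisionLogEntry(**body, entry_hash=_digest(body))
    log.append(entry)
    return entry


def verify_chain(log: List[DecisionLogEntry]) -> bool:
    prev_hash = GENESIS_HASH
    for entry in log:
        body = asdict(entry)
        stored = body.pop("entry_hash")
        # Each entry must point at its predecessor and match its own digest.
        if entry.prev_hash != prev_hash or _digest(body) != stored:
            return False
        prev_hash = stored
    return True


log: List[DecisionLogEntry] = []
append_entry(log, timestamp="2024-01-01T00:00:00Z", merchant_id="m-1",
             anomaly_score=0.2, suppression_threshold=0.4, suppressed=True,
             justification="rule-based exception", decided_by=None)
print(verify_chain(log))
```

Editing any stored field after the fact changes that entry's digest, so `verify_chain` fails from that entry onward.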

Measurable Outcomes: SLA Adherence & Confidence Gains

The ultimate goal is to improve SLA adherence, reduce false positives, and increase overall confidence in your compliance decisions. Your metrics here are your North Star.

Strategic Metrics

- Reduction in False Positives: Track the percentage of flagged anomalies that turn out to be legitimate.

- Improved SLA Adherence: Monitor the time taken to onboard new merchants while maintaining compliance.

- Increased Compliance Confidence: Measure the level of confidence in compliance decisions through surveys and audits.

- Decrease in Fraudulent Transactions: Track the number of fraudulent transactions originating from newly onboarded merchants.

- Streamlined Investigations: Track Mean Time To Investigate (MTTI) to reduce the time fraud investigation teams spend on each case.
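
Two of these metrics can be computed directly from reviewed alerts. The sketch below assumes a hypothetical `ReviewedAlert` record that captures the outcome of each investigation; the field names are illustrative.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ReviewedAlert:
    flagged: bool                  # raised to an analyst, i.e. not suppressed
    confirmed_fraud: bool          # outcome after investigation
    minutes_to_investigate: float


def false_positive_share(alerts: List[ReviewedAlert]) -> float:
    """Share of flagged alerts that turned out to be legitimate."""
    flagged = [a for a in alerts if a.flagged]
    if not flagged:
        return 0.0
    return sum(not a.confirmed_fraud for a in flagged) / len(flagged)


def mean_time_to_investigate(alerts: List[ReviewedAlert]) -> float:
    """MTTI in minutes over flagged alerts only."""
    times = [a.minutes_to_investigate for a in alerts if a.flagged]
    return sum(times) / len(times) if times else 0.0


alerts = [
    ReviewedAlert(True, False, 30.0),
    ReviewedAlert(True, True, 90.0),
    ReviewedAlert(True, False, 60.0),
    ReviewedAlert(False, False, 0.0),
]
print(round(false_positive_share(alerts), 2), mean_time_to_investigate(alerts))
```

Tracking both numbers over the same window helps show whether a threshold change reduced analyst workload or merely shifted it.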

By implementing this visionary architecture and continuously monitoring these metrics, you can transform your merchant acquiring risk control platform from a reactive system to a proactive strategic asset.

Ready to explore practical examples of this architecture in action? See how we've helped other organizations improve their compliance frameworks with effective location anomaly suppression, and access data lineage map templates: Data Lineage Maps for Geolocation Compliance

Try It In Your Product

Ready to apply this pattern? Start with a free API test, issue your key, and proceed to docs.

Fine-Tuning Anomaly Thresholds: A Practical Guide

Calibrating anomaly suppression thresholds is not a one-time task but an iterative process. The ideal threshold balances false positives against false negatives, and it must be revisited as fraud patterns evolve and business needs shift.

Steps to Calibrate Anomaly Suppression Thresholds

- Establish Baseline Performance: Before making any adjustments, establish a baseline for your current anomaly detection system. Track key metrics such as false positive rate, false negative rate, and SLA adherence.

- Segment Your Merchant Population: Different types of merchants may exhibit different location anomaly patterns. Segment your merchant population based on factors such as industry, geographic region, and transaction volume.

- Analyze Anomaly Distributions: Analyze the distribution of anomaly scores for each merchant segment. Look for patterns and outliers that may indicate the need for different suppression thresholds.

- Experiment with Threshold Adjustments: Start by making small adjustments to the suppression thresholds for a subset of merchants. Monitor the impact of these adjustments on key metrics.

- Implement A/B Testing: Run A/B tests to compare the performance of different suppression thresholds. This allows you to quantitatively measure the impact of different thresholds on key metrics.

- Gather Feedback from Risk Analysts: Solicit feedback from risk analysts who are responsible for reviewing anomalies. They can provide valuable insights into the effectiveness of the suppression thresholds.

- Iterate and Refine: Continuously iterate and refine your suppression thresholds based on data analysis, A/B testing results, and feedback from risk analysts.

- Automate the Threshold Tuning: As you gain more experience, consider automating the tuning of suppression thresholds, while keeping human oversight and validation of each change.

- Document Your Process: Keep a record of all changes made to your suppression thresholds, along with the rationale for those changes.
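
The segmentation and distribution-analysis steps can be sketched as a small calibration routine. It assumes labeled history is available as `(segment, score, is_fraud)` tuples and picks, per segment, the highest candidate threshold that keeps the share of suppressed confirmed fraud under a cap. The cap, the candidate grid, and the fallback value are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Sequence, Tuple

CANDIDATES = (0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9)


def calibrate_thresholds(
    history: Iterable[Tuple[str, float, bool]],
    max_missed_fraud: float = 0.05,
    candidates: Sequence[float] = CANDIDATES,
    fallback: float = 0.1,
) -> Dict[str, float]:
    by_segment: Dict[str, List[Tuple[float, bool]]] = defaultdict(list)
    for segment, score, is_fraud in history:
        by_segment[segment].append((score, is_fraud))

    thresholds: Dict[str, float] = {}
    for segment, rows in by_segment.items():
        fraud_scores = [score for score, is_fraud in rows if is_fraud]
        if not fraud_scores:
            # No confirmed fraud to calibrate against: stay conservative.
            thresholds[segment] = fallback
            continue
        best = 0.0  # 0.0 suppresses nothing when no candidate qualifies
        for candidate in sorted(candidates):
            # Fraud cases scoring below the candidate would have been suppressed.
            missed = sum(score < candidate for score in fraud_scores)
            if missed / len(fraud_scores) <= max_missed_fraud:
                best = candidate
        thresholds[segment] = best
    return thresholds


history = [
    ("retail", 0.2, False), ("retail", 0.3, False),
    ("retail", 0.7, True), ("retail", 0.9, True),
    ("travel", 0.4, True), ("travel", 0.5, False), ("travel", 0.8, True),
]
print(calibrate_thresholds(history))
```

A routine like this only proposes values. In line with the steps above, proposals would still go through A/B testing and analyst review before taking effect.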

Anti-Patterns in Threshold Management

- Static Thresholds: Using static thresholds that are not dynamically adjusted to changing fraud patterns and business conditions.

- One-Size-Fits-All Approach: Applying the same suppression thresholds to all merchants, regardless of their risk profile.

- Ignoring Feedback: Failing to solicit and act on feedback from risk analysts and other stakeholders.

- Lack of Monitoring: Not continuously monitoring the performance of the anomaly detection system and the impact of suppression thresholds.

- Over-reliance on Automation: Automating the threshold tuning process without proper human oversight and validation.

Integrating Real-World Context

Consider the impact of real-world events on location data. Anomaly detection models must be able to handle scenarios such as:

- Public Holidays: Business activity might shift during major holidays. Your model needs to account for this.

- Natural Disasters: A hurricane or earthquake can temporarily displace businesses and individuals. Anomaly detection should adapt to these situations, avoiding falsely flagging legitimate movements as suspicious.

- Political Unrest: Protests and civil unrest can disrupt business operations and lead to unusual location patterns.

For example, a sudden spike in transactions originating from a normally quiet location might be flagged as anomalous. However, if that location is hosting a major sporting event or music festival, the increased activity is likely legitimate. Integrating external data sources, such as event calendars and news feeds, can provide valuable context for interpreting location anomalies.
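
A minimal sketch of this kind of context lookup, assuming a hypothetical in-memory event calendar keyed by region (a real deployment would draw on external event and news feeds):

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass(frozen=True)
class KnownEvent:
    name: str
    region: str
    start: date
    end: date


def matching_event(region: str, day: date, calendar: List[KnownEvent]) -> Optional[KnownEvent]:
    # Return the first calendar event covering this region and day, if any.
    for event in calendar:
        if event.region == region and event.start <= day <= event.end:
            return event
    return None


def annotate_anomaly(region: str, day: date, score: float, calendar: List[KnownEvent]) -> Dict:
    event = matching_event(region, day, calendar)
    # Context is attached for the analyst; the score itself is left unchanged.
    return {
        "region": region,
        "day": day.isoformat(),
        "anomaly_score": score,
        "context": event.name if event else None,
    }


calendar = [KnownEvent("music festival", "region-a", date(2024, 7, 5), date(2024, 7, 7))]
print(annotate_anomaly("region-a", date(2024, 7, 6), 0.8, calendar))
```

The sketch deliberately annotates rather than suppresses: an event explains a spike, but it can also provide cover for fraud, so the final call stays with the threshold logic and the analyst.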

The Importance of Communication and Collaboration

Building and maintaining an effective location anomaly suppression system requires strong communication and collaboration between different teams:

- Data Science: Responsible for developing and maintaining the anomaly detection model.

- Engineering: Responsible for building and operating the data pipelines and infrastructure that support the anomaly detection system.

- Risk Management: Responsible for defining the risk policies and suppression thresholds.

- Fraud Investigation: Responsible for investigating and resolving anomalies.

- Compliance: Responsible for enforcing regulatory requirements and conducting audits.

Regular communication and collaboration between these teams are essential for ensuring that the anomaly detection system is aligned with business needs and effectively mitigating risk. This includes:

- Sharing insights and feedback: Data scientists should share insights from their analysis of anomaly data with risk managers and fraud investigators.

- Defining clear roles and responsibilities: Everyone should understand who is responsible for what.

- Establishing clear communication channels: Create channels for reporting issues and sharing information.

- Conducting regular meetings: Hold regular meetings to discuss the performance of the anomaly detection system and address any issues.

Data Enrichment Strategies: Enhancing Location Intelligence

The accuracy and effectiveness of location anomaly detection can be significantly improved by enriching location data with additional information. Consider these data enrichment strategies:

- IP Address Geolocation: Supplementing GPS data with IP address geolocation can provide additional insights into the location of merchants, especially for online transactions.

- Business Registration Data: Cross-referencing location data with business registration databases can help verify the legitimacy of merchants and identify potential shell corporations.

- Social Media Data: Analyzing social media activity associated with a merchant can provide valuable context about their business operations and physical presence.

- Device Fingerprinting: Device fingerprinting can help identify merchants who are attempting to mask their true location using virtual private networks (VPNs) or proxy servers.

- Transaction History: Analyzing a merchant's transaction history can reveal patterns and anomalies that may indicate fraudulent activity.

Data enrichment comes with its own challenges, such as privacy considerations and cost. Make sure you have assessed the tradeoffs.
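
One simple way to combine two location signals, such as a GPS fix and an IP geolocation result, is to measure the great-circle distance between them. The sketch below uses the standard haversine formula. The 100 km tolerance is an arbitrary assumption, since IP geolocation accuracy varies widely by provider and region.

```python
import math
from typing import Tuple

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def location_mismatch(
    gps: Tuple[float, float], ip_located: Tuple[float, float], tolerance_km: float = 100.0
) -> bool:
    # True when the two signals disagree by more than the tolerance.
    return haversine_km(*gps, *ip_located) > tolerance_km


berlin = (52.52, 13.405)
paris = (48.8566, 2.3522)
print(round(haversine_km(*berlin, *paris)), location_mismatch(berlin, paris))
```

The resulting distance, or the boolean mismatch, can feed the feature vector alongside the other enrichment signals.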

Evolving Threat Landscape and Model Maintenance

The threat landscape is constantly evolving, and fraud actors are always developing new techniques to evade detection. The anomaly detection model must be regularly retrained with new data to keep it up to date and effective.

Model Retraining Strategies

- Continuous Learning: Implement a continuous learning pipeline that automatically retrains the model with new data on a regular basis.

- Scheduled Retraining: Schedule regular retraining sessions to incorporate new data and address model drift.

- Event-Triggered Retraining: Trigger retraining sessions when significant changes are detected in the data distribution or when new fraud patterns are identified.

- Adversarial Training: Train the model on deliberately manipulated examples, such as spoofed or masked location signals, so that it is more robust to evasion attempts.
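
Event-triggered retraining needs some measure of distribution change. One commonly used option is the Population Stability Index (PSI), sketched below for anomaly scores assumed to lie in [0, 1]. The 0.2 limit is a widely quoted rule of thumb rather than a value from this architecture, and both inputs are assumed to be non-empty.

```python
import math
from typing import List, Sequence


def _bin_shares(scores: Sequence[float], bins: int) -> List[float]:
    counts = [0] * bins
    for score in scores:
        # Clamp so scores at or beyond the edges land in the first or last bin.
        index = max(0, min(int(score * bins), bins - 1))
        counts[index] += 1
    total = len(scores)
    # A small floor avoids log(0) for empty bins.
    return [max(count / total, 1e-6) for count in counts]


def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline and a recent score sample."""
    e = _bin_shares(expected, bins)
    a = _bin_shares(actual, bins)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def should_retrain(expected: Sequence[float], actual: Sequence[float], limit: float = 0.2) -> bool:
    return psi(expected, actual) > limit


baseline = [0.05] * 50 + [0.15] * 50
recent = [0.85] * 100
print(should_retrain(baseline, baseline), should_retrain(baseline, recent))
```

A check like this could run on each day's scores and open a retraining job, or at least an alert for the data science team, when the limit is exceeded.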

In addition to retraining the model, it's important to regularly evaluate its performance and identify areas for improvement. This includes:

- Monitoring Key Metrics: Track key metrics such as false positive rate, false negative rate, and detection rate.

- Conducting Root Cause Analysis: Conduct root cause analysis to identify the underlying causes of errors and biases in the model.

- Experimenting with New Features: Experiment with new features and data sources to improve the accuracy and robustness of the model.
